Operation Stargate, the project to make AI an “essential infrastructure”

Koohan Paik-Mander. 22 Jan 25

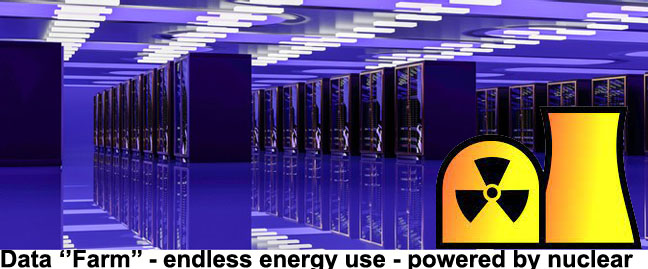

I fear we will very soon be seeing a pivot from endless war on other countries to a focused crushing of the American people, under the banner of Operation Stargate, the project to make AI an “essential infrastructure,” like water or electricity. How that looks on the ground will be enormous data centers that are a half-million square feet each (the size of 2-1/2 Walmart Superstores or 8.5 football fields) constructed in clusters all over the nation. Wherever they are built, all nature perishes, because it is a wholesale smothering of the earth with concrete. They are now building ten of these data centers in Texas, as the first phase of this scourge on the American people. Each one of the ten uses anywhere from 20 to upwards of 100 megawatts of power. The entire island of Hawaii, where I live, uses 180 megawatts of power. This is why they pulled the U.S. out of the Paris Accord, which would be an obstruction to the central plan of this administration to entrench AI infrastructure — arguably more central than deportations or building a wall.
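As a rough check of the power figures quoted above, here is a minimal sketch. It assumes all ten Texas facilities fall within the quoted range of 20 to 100 megawatts and uses the 180-megawatt figure given for Hawaii island. The function and constant names are illustrative, not taken from any source.

```python
# Figures quoted in the text, in megawatts. Names are illustrative only.
CENTERS = 10
MW_LOW, MW_HIGH = 20, 100
HAWAII_ISLAND_MW = 180


def cluster_range_mw(n=CENTERS, low=MW_LOW, high=MW_HIGH):
    """Return the (minimum, maximum) combined draw in MW for n data centers."""
    return n * low, n * high


low_total, high_total = cluster_range_mw()
print(f"Combined draw: {low_total} to {high_total} MW")
print(
    "Relative to Hawaii island: "
    f"{low_total / HAWAII_ISLAND_MW:.1f}x to {high_total / HAWAII_ISLAND_MW:.1f}x"
)
```

On these assumptions, the ten facilities together would draw somewhere between roughly one and five and a half times the island's quoted load.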

They are proposing using federal lands as well, such as national parks, for these data centers. The first $500 billion was committed to their construction at the first Trump press conference. At the press conference, they didn’t mention any of the above. They just talked about how AI was going to cure cancer. It reminded me of the U.S. general telling the Bikini islanders that the atomic tests were to be “for the good of mankind.”

Each data center is filled from ceiling to floor with stacks of metal boxes — computers which process all the AI calculations, which are in the billions per second, and which cause the machines to heat up. To cool them, giant pipes filled with water snake through the basements of these buildings with capillaries of cooling liquid that branch off up to run alongside each of the machines. The water consumption for cooling data centers is enormous.

They want to cover the continent with these data centers, much like the initiative to cover it with interstate highways. But I don’t see it like that. For me, it is like watching the tracks being laid that would guide train-cars full of Jews and other “undesirables” to the incinerators at Auschwitz and Dachau. It is like watching the gureombi at Gangjeong be blasted, only to be paved over to build a navy base. It is like watching the limestone forest on Guam be razed to construct the live-fire training range. It is like watching the farming villages at Pyeongtaek protest the construction of one of the largest U.S. bases in the world… So I’m used to watching the horror of autocracy smashing nature and community. Only difference is, now it’s in my own country.

“This land is your land, this land is my land.” We used to sing that song in grade school — remember?

Operation Stargate is building not only the hardware of this infrastructure; it is building the software as well. It seeks to fully automate government. It is a libertarian’s wet dream. Its planners in Silicon Valley saw their wealth balloon during the pandemic when everyone went online. The idea behind a fully automated civilization is to revive that scale of profit acceleration for Silicon Valley, by getting society online as much as possible. Remember how every meeting, every lesson, every funeral, every yoga lesson — everything — was done online? They want that back again, but with many added AI “bots”, and what better place to start than government? Just as all the schools were online during the pandemic, the plan is for all of government to be online. Soon, trying to get assistance from City Hall will be as challenging as talking to a person at Yahoo. Maybe they’ll farm out the humans who answer our phone calls to our virtual City Hall with a bunch of underpaid workers in the Philippines or India.

But full automation is not truly human-free. AI requires a constant stream of data to train it. Slave wage workers will be hired in Africa to “annotate”; that is, to sit at computers and click meaningless boxes to train the AI models. The American people will also play a role in training. We’ll be surrounded by a smart grid and smart meters, smart appliances, smart cars, smart air fryers, smart homes — everything “smart”, which really means connected to sensors that record our voices, our images, our behavior patterns and any shifts in the environment. Those recordings provide more data streams to feed AI. Did you know that the reason they no longer manufacture stick shifts is because the sensors can’t translate manual transmission into usable data?

Data is considered more valuable than money these days. In fact, the U.S. government pays for satellite rental (most likely to Musk’s Starlink) in data from surveillance.

In the AI world, the word “surveillance” refers not only to cameras and microphones, but any of the ways these sensors are extracting data. Interaction with government agencies will be more opportunities to collect our data. Every interaction will be surveillance. You see, an AI infrastructure cannot exist without a surveillance infrastructure, a surveillance state.

The most egregious proposal is called MedShield, which was proposed in Congress last term but is certain to return, because it would be a means for the much-needed data extraction. It proposes to transform the Department of Health and Human Services into a biowarfare hub. Combined with the “AI first, regulation last” mentality of the incoming administration, it would amount to a full-spectrum assault on Nature and human rights.

Introduced by Sen. Mike Rounds (R-South Dakota), the bill came off the drawing board at the Special Competitive Studies Project (SCSP), a think tank founded by former Google CEO Eric Schmidt.

The bill’s stated purpose was to require a pandemic preparedness and response program. Couched in the euphemism “surveillance,” the bill’s passage would ultimately mandate the continual extraction of DNA samples from hundreds of millions of Americans in order to train AI models, ostensibly to track and monitor biological attacks, and to create antidotes for them. There has been some talk about nationalistically spinning this as “patriotism,” as if it were the postwar Victory Garden movement. But nothing in the bill’s text hints at the egregious Constitutional violations to privacy and individual agency that this would pose.

Nor does the Act explicate that, as a general rule, all supposedly “defensive” weapons can be inversely deployed for offense. Building an arsenal of millions of new synthetic life forms to defend against a biological weapons attack has the potential, if not the covert intent, to irreversibly unleash new viruses, bacteria, proteins and other organisms into the ecological systems. Case in point: we saw how the “defensive” development of the atom bomb played out.

The MedShield Act would employ technology that works with large language model AI in much the same way that ChatGPT operates. The large language model consists of a maze of networks with billions of artificial neurons. It is trained by inputting hundreds of millions, perhaps billions, of relevant data points. (This is why a surveillance state is essential for any nation wishing global AI dominance — to continually feed the AI’s hungry maw.) Once the model has been trained by the data set to recognize patterns and relationships, a query can be entered into the AI, which then processes it by making millions of calculations before spitting out its answer.

For example, generative AI could be queried to “make a Covid-like virus that doesn’t show symptoms until at least five days after contraction.” Or “what should go into a vaccine to inoculate against a flu virus that was engineered to last 30 days?” The possibilities for biological warfare are endless, which is why weapons technology companies like Palantir (maker of Gaza-tested Lavender AI) are studying how to use AI to create and mitigate biological threats. Los Alamos Labs is teaming up with OpenAI to do the same.

According to the SCSP, MedShield is necessary in order to keep ahead of China in the AI arms race. Here’s how the SCSP explains MedShield:

What is MedShield? Imagine a system that could protect us from dangerous pathogens and bioweapons as effectively as our military would defend against inbound ballistic missiles (NORAD) or nuclear attacks (STRATCOM). That’s the idea behind MedShield. This potential national technology program is a bold, fully-integrated, AI-enabled system-of-systems that could neutralize a biological threat, whether from a state, non-state actor, or nature (biological threats go beyond pathogens into five basic types). MedShield would create one holistic “kill chain” against biological threats.

It is a discomfiting notion that protocols would be implemented that would connect our national healthcare department to the Pentagon, and would be modeled after the NORAD and STRATCOM war commands. Could a more sinister, inappropriate framing exist for the office charged with the well-being of our women, men and children? I think not.

All generative AI — not only MedShield — requires a surveillance infrastructure of continual data extraction. All that data could come from anywhere, either legally or, as is more often the case, surreptitiously, from everyday citizens, indigenous peoples, prisoners, marginalized communities, or the Global South. The human-rights threats of an unregulated, comprehensive, AI-driven federal government would make the East German Stasi look like Munchkinland.

Essentially, Elon Musk is rejiggering all of America’s society and economy to recalibrate itself around AI. We are expected to give up our land, water and dreams of a livable climate in order to make the data centers operational. We are expected to let our government transform into a data extraction apparatus. AI runs on energy, but it lives on data. It can’t live without extracting our data. Our role in society is being reduced to “data resource.” A commodity. If we serve AI, as is the plan, we are not citizens but slaves.

The endless war agenda of the Democrats will be allowed to wind down by Silicon Valley because they can make just as much money, if not more, installing infrastructures for domestic terror instead. Unlike the legacy warmongers like Lockheed and Raytheon, Elon Musk and his digital cabal don’t care who or what they destroy. Everything we are is fodder for their profit machine.

The only answer is to return to an embodied existence, offline. Ditch the digital. Resist smart anything. Oppose the AI-ification of government.

Weatherwatch: Could small nuclear reactors help curb extreme weather? There’s a credibility gap.

As natural disasters make need to cut CO2 emissions clearer than ever, energy demand of AI systems is about to soar.

Paul Brown 27 Jan 25 – https://www.theguardian.com/world/2025/jan/17/weatherwatch-could-small-nuclear-reactors-help-curb-extreme-weather

Violent weather events have been top of the news agenda for weeks, with scientists and fact-based news organisations attributing their increased severity to climate breakdown. The scientists consulted have all emphasised the need to cut greenhouse gas emissions.

At the same time there are predictions about artificial intelligence and datacentres urgently needing vast amounts of new electricity sources to keep them running. Small modular nuclear reactors (SMRs) have been touted as the green solution. The reports suggest that SMRs are just around the corner and will be up and running in the 2030s. Google first ordered seven, followed by Amazon, Microsoft and Meta each ordering more.

With billions of dollars on offer, many startup and established nuclear companies are getting in on the act. More than 90 separate designs for SMRs are being marketed across the world. Many governments, including the UK, are pouring money into design competitions and other ways to incentivise development.

In all this there is a credibility gap. None of the reactor designs have left the drawing board, prototypes have not been built or safety checks begun, and costs are at best optimistic guesses. SMRs may succeed, but let big tech gamble their spare billions on them while the rest of us are building cheap renewables we know work.

In Flamanville, EPR vibrations weigh down EDF

Blast 15th Jan 2025

https://www.blast-info.fr/articles/2025/a-flamanville-les-vibrations-de-lepr-plombent-edf-27fa5zyHQ6mpDzOKgti6kw

Last week, Luc Rémont, CEO of EDF, received a worrying report from the engineers working on the Flamanville EPR. It reveals a recurring problem of excessive vibrations and indicates that it is not known whether the EPR will be able to operate at full power. Revelations.

At EDF, troubles come in squadrons. This Tuesday, January 14, the Court of Auditors published a new report on the Flamanville EPR. The venerable institution on Rue Cambon (Paris) now estimates the final cost of the project at 23.7 billion euros. An amount that is significantly higher than the previous assessment made by the Court in 2020: 19.1 billion.

Adding insult to injury, the report specifies that “the calculations made by the Court result in a mediocre profitability for Flamanville 3”: the tiny margin that EDF could generate will not be enough to repay the cost of the loans! For that to happen, the EPR must one day operate at full power. And of that, even the EDF teams are no longer really convinced.

The scene takes place at a dinner party in Paris late last week. “We were in a meeting in the CEO’s office and everything was going well. But then he received a report from Flamanville and the atmosphere suddenly cooled,” says a senior executive of the utility who was present at the meeting. If Luc Rémont, the CEO, did not fall off his seat when he read the report, he came close.

The cause? The engineers working on the reactor’s start-up have a doubt. And a big one. “They don’t know if the EPR will be able to operate at full power,” says this senior executive.

This question, which exists among many employees who worked on the nightmarish reactor construction site (twelve years behind schedule), is now shared by the teams who took charge of the reactor. And it is based on an observation: contrary to what EDF’s communication claims, the vibration problems affecting the primary circuit of the reactor are far from being resolved. “The report confirms that there are still problems with excessive vibrations,” says the decidedly very talkative manager.

At the meeting of the local information committee for the Flamanville nuclear power plant in April 2024, held a few days before the ASN authorised EDF to load nuclear fuel into the reactor vessel, the utility had nevertheless brushed aside the issue of vibrations, stating, clearly a little too quickly, that everything was sorted.

But already on the executive floors in Paris, the knives are being sharpened and the hunt for the culprit is open. Who will take the blame? One name is on everyone’s mind: that of Alain Morvan, the director of the EPR project until last October, accused in veiled terms of having swept too much dust under the carpet.

Contacted by email on Tuesday 14 January late in the morning, EDF indicated that it was sticking to its construction cost of 13.2 billion (excluding interim interest). But it refused to comment on our information on the vibrations. Questioned the same day also by email, the Nuclear Safety and Radiation Protection Authority (ASNR), resulting from the merger of the ASN with the IRSN, did not respond to us.

UK will explore nuclear power for new AI data centre plan

The UK is planning special districts for constructing data centers and will explore dedicating nuclear energy to the sites as part of a Labour government project to boost technology growth and the ecosystem for artificial intelligence. These “AI Growth Zones” will include enhanced access to electricity and easier planning approvals for data centers, the government said on Sunday. It said the first such zone will be in Culham, home of the UK Atomic Energy Authority.

Bloomberg 12th Jan 2025,

https://www.bloomberg.com/news/articles/2025-01-12/uk-will-explore-nuclear-power-for-new-ai-data-center-plan

While Los Angeles burns, AI fans the flames

Artificial intelligence is a water-guzzling industry hastening future climate crises from California’s own backyard.

By Schuyler Mitchell, Truthout, January 11, 2025

“………………………………………………. Trump’s latest smear campaign is little more than political football. But the renewed attention on California’s water does highlight ongoing tensions over the conservation and management of this finite resource. As the climate crisis worsens, it’s expected to exacerbate heat waves and droughts, bringing water shortages and increasingly devastating fires like those currently scorching southern California. The situation in Los Angeles is already a catastrophe. Climate change-induced water shortages will make imminent disasters even worse.

In the face of this grim reality, it’s worth revisiting one of the major water-guzzling industries that’s hastening future crises from California’s own backyard: artificial intelligence (AI).

Silicon Valley is the epicenter of the global AI boom, and hundreds of Bay Area tech companies are investing in AI development. Meanwhile, in the southern region of the state, real estate developers are rushing to build new data centers to accommodate expanded cloud computing and AI technologies. The Los Angeles Times reported in September that data center construction in Los Angeles County had reached “extraordinary levels,” increasing more than sevenfold in two years.

This technology’s environmental footprint is tremendous. AI requires massive amounts of electrical power to support its activities and millions of gallons of water to cool its data centers. One study predicts that, within the next five years, AI-driven data centers could produce enough air pollution to surpass the emissions of all cars in California.

Data centers on their own are water-intensive; California is home to at least 239. One study shows that a large data center can consume up to 5 million gallons of water per day, or as much as a town of 50,000 people. In The Dalles, Oregon, a local paper found that a Google data center used over a quarter of the city’s water. Artificial intelligence is even more thirsty: Reporting by The Washington Post found that Meta used 22 million liters of water simply training its open source AI model, and UC Riverside researchers have calculated that, in just two years, global AI use could require four to six times as much water as the entire nation of Denmark.
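The water figures above can be put on a common scale with a little arithmetic. The sketch below is illustrative only: it uses the quoted 5 million gallons per day, the town of 50,000 people, and the 22 million liters reported for Meta's model training, and it assumes US liquid gallons throughout. The helper names are hypothetical.

```python
# Exact definition of the US liquid gallon in liters.
LITERS_PER_US_GALLON = 3.785411784


def per_capita_gallons(total_gallons_per_day, population):
    """Daily water use per person implied by a town-sized comparison."""
    return total_gallons_per_day / population


def liters_to_gallons(liters):
    """Convert liters to US liquid gallons."""
    return liters / LITERS_PER_US_GALLON


# Large data center versus a town of 50,000 (figures quoted in the text).
print(per_capita_gallons(5_000_000, 50_000))  # 100.0 gallons per person per day

# Meta's reported training water use, expressed in millions of gallons.
print(round(liters_to_gallons(22_000_000) / 1e6, 1))  # 5.8
```

Read this way, the town comparison implies about 100 gallons per resident per day, and the reported training run corresponds to a little more than one day of a large data center's peak consumption.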

Many U.S. data centers are based in the western portion of the country, including California, where wind and solar power is more plentiful — and where water is already scarce. In 2022, a researcher at Virginia Tech estimated that about one-fifth of data centers in the U.S. draw water from “moderately to highly stressed watersheds.”

According to the Fifth National Climate Assessment, the U.S. government’s leading report on climate change, California is among the top five states suffering economic impacts from climate crisis-induced natural disaster. California already is dealing with the effects of one water-heavy industry; the Central Valley, which feeds the whole country, is one of the world’s most productive agricultural regions, and the Central Valley aquifer ranks as one of the most stressed aquifers in the world. ClimateCheck, a website that uses climate models to predict properties’ natural disaster threat levels, says that California ranks number two in the country for drought risk.

In August 2021, the U.S. Bureau of Reclamation declared the first-ever water shortage on the Colorado River, which supplies water to California — including roughly a third of southern California’s urban water supply — as well as six other states, 30 tribal nations and Mexico. The Colorado River water allotments have been highly contested for more than a century, but the worsening climate crisis has thrown the fraught agreements into sharp relief. Last year, California, Nevada and Arizona agreed to long-term cuts to their shares of the river’s water supply.

Despite the precarity of the water supply, southern California’s Imperial Valley, which holds the rights to 3.1 million acre-feet of Colorado River water, is actively seeking to recruit data centers to the region.

“Imperial Valley is a relatively untapped opportunity for the data center industry,” states a page on the Imperial Valley Economic Development Corporation’s website. “With the lowest energy rates in the state, abundant and inexpensive Colorado River water resources, low-cost land, fiber connectivity and low risk for natural disasters, the Imperial Valley is assuredly an ideal location.” A company called CalEthos is currently building a 315-acre data center in the Imperial Valley, which it says will be powered by clean energy and an “efficient” cooling system that will use partially recirculated water. In the bordering state of Arizona, Meta’s Mesa data center also draws from the dwindling Colorado River.

The climate crisis is here, but organizers are not succumbing to nihilism. Across the country, community groups have fought back against big tech companies and their data centers, citing the devastating environmental impacts. And there’s evidence that local pushback can work. In the small towns of Peculiar, Missouri, and Chesterton, Indiana, community campaigns have halted companies’ data center plans.

“The data center industry is in growth mode,” Jon Reigel, who was involved in the Chesterton fight, told The Washington Post in October. “And every place they try to put one, there’s probably going to be resistance. The more places they put them the more resistance will spread.” https://truthout.org/articles/while-los-angeles-burns-ai-fans-the-flames/?utm_source=Truthout&utm_campaign=3634e1951f-EMAIL_CAMPAIGN_2025_01_11_08_34&utm_medium=email&utm_term=0_bbb541a1db-3634e1951f-650192793

Lawsuit challenges NRC on SMR regulation

Friday, 10 January 2025, https://www.world-nuclear-news.org/articles/lawsuit-challenges-nrc-on-smr-regulation

The States of Texas and Utah and microreactor developer Last Energy Inc are challenging the US regulator over its application of a rule it adopted in 1956 to small modular reactors and research and test reactors.

Under the US Nuclear Regulatory Commission (NRC) Utilization Facility Rule, all US reactors are required to obtain NRC construction and operating licences regardless of their size, the amount of nuclear material they use or the risks associated with their operation. The plaintiffs say this imposes “complicated, costly, and time-intensive requirements that even the smallest and safest SMRs and microreactors – down to those not strong enough to power an LED lightbulb” must satisfy to secure the necessary licences. This does not only affect microreactors: existing research and test reactors such as those at the universities in both Texas and Utah face “significant costs” to maintain their NRC operating licences, the plaintiffs say.

In the filing, Last Energy – developer of the PWR-20 microreactor – says it has invested “tens of millions of dollars” in developing small nuclear reactor technology, including USD2 million on manufacturing efforts in Texas alone, and has agreements to develop more than 50 nuclear reactor facilities across Europe. But although it has a “preference” to build in the USA, “Last Energy nonetheless has concluded it is only feasible to develop its projects abroad in order to access alternative regulatory frameworks that incorporate a de minimis standard for nuclear power permitting”.

Noting that only three new commercial reactors have been built in the USA over the past 28 years, the plaintiffs say building a new commercial reactor of any size in the country has become “virtually impossible” due to the rule, which they say is a “misreading” of the NRC’s own scope of authority.

They are asking the court to set aside the rule, “at least as applied to certain small, non-hazardous reactors”, and exempt their research reactors and Last Energy’s small modular reactors (SMRs) from the commission’s licensing requirements.

Houston, Texas-based law firm King & Spalding said the lawsuit, if it is successful, would “mark a turning point” in the US nuclear regulatory framework – but warns that it could also create greater uncertainty as advanced nuclear technologies get closer to commercial readiness.

“Regardless the outcome, the Plaintiffs’ lawsuit highlights the challenges in applying the Utilization Facility Rule to the advanced nuclear reactors now under development in the US,” the company said in an analysis released on 9 January.

But the NRC is already addressing the issue: in 2023, it began the rulemaking process to establish an optional technology-inclusive regulatory framework for new commercial advanced nuclear reactors, which would include risk-informed and performance-based methods “flexible and practicable for application to a variety of advanced reactor technologies”. SECY-23-0021: Proposed Rule: Risk-Informed, Technology-Inclusive Regulatory Framework for Advanced Reactors is currently open for public comment until 28 February, and the NRC has said it expects to issue a final rule “no later than the end of 2027”.

The lawsuit has been filed with the US District Court in the Eastern District of Texas.

Deep Fission to supply Endeavour data centers with 2GW of nuclear energy from “mile-deep” SMR

The first reactors are expected to come online in 2029

DCD, January 07, 2025 By Zachary Skidmore

Deep Fission, a small modular nuclear reactor (SMR) developer, has partnered with Endeavour Energy, a US sustainable infrastructure developer, to develop and deploy its technology at scale.

As per the agreement, the partners have committed to co-developing 2GW of nuclear energy to supply Endeavour’s global portfolio of data centers which operate under the Endeavour Edged brand. The first reactors are expected to be operational by 2029.

The Deep Fission Borehole Reactor 1 (DFBR-1) is a pressurized water reactor (PWR) that produces 15MWt (thermal) and 5MWe (electric) and has an estimated fuel cycle of between ten and 20 years…………………………………
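To give a sense of scale, the reactor ratings above can be set against the 2GW commitment. This is a back-of-the-envelope sketch, not a figure from the article: it assumes the 2GW target refers to electrical output and that every unit runs at its full 5MWe rating. The function names are hypothetical.

```python
import math

# Ratings quoted in the text for DFBR-1, in megawatts.
THERMAL_MW = 15
ELECTRIC_MW = 5

# Assumption: the 2GW commitment is electrical output.
TARGET_MW = 2_000


def thermal_efficiency(electric_mw, thermal_mw):
    """Fraction of thermal output converted to electricity."""
    return electric_mw / thermal_mw


def units_needed(target_mw, unit_mw):
    """Smallest whole number of units whose combined rating meets the target."""
    return math.ceil(target_mw / unit_mw)


print(f"Thermal efficiency: {thermal_efficiency(ELECTRIC_MW, THERMAL_MW):.0%}")
print(f"Units needed for 2GW: {units_needed(TARGET_MW, ELECTRIC_MW)}")
```

Under those assumptions, each unit converts about a third of its heat to electricity, and meeting 2GW would take on the order of 400 reactors.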

Deep Fission plans to release white papers throughout the regulatory approval process for discussion direction on key issues surrounding the SMR………………………………..

Based in Berkeley, California, Deep Fission was founded in 2023. In August last year, it announced a $4 million pre-seed funding round to accelerate efforts in hiring, regulatory approval, and the commercialization of its SMR.

Edged, Endeavour’s data center arm, will be the primary beneficiary of the power produced by DFBR-1.

The company, which was formed in 2021, has data centers across the US and the Iberian peninsula, with facilities in operation or development in Madrid, Barcelona, Lisbon, and across the US, including Missouri, Arizona, Texas, Georgia, Iowa, Ohio, and Illinois.

The company specializes in data centers built for high-density artificial intelligence, which utilize a waterless cooling system…………………….. https://www.datacenterdynamics.com/en/news/deep-fission-to-supply-endeavour-data-centers-with-2gw-of-nuclear-energy-from-mile-deep-smr/

Nuclear energy groups race to develop ‘microreactors’

Companies vie to create small plants for deployment to sites from data centres to oil platforms

Ft.com Malcolm Moore and George Steer in London, 9 Jan 25

Nuclear energy companies are trying to shrink reactors to the size of shipping containers in a bid to compete with electric batteries as a source of zero-carbon energy. Led by Westinghouse, the race to develop “microreactors” is based on the notion they can replace diesel and gas generators used by everything from data centres to remote off-grid communities to offshore oil and gas platforms.

Microreactors have a much smaller output of up to 20MW, enough to power roughly 20,000 homes, and are likely to operate like large batteries, with no control room or workers on site. The reactors would be transported to a site, plugged in and left to run for several years before being taken back to their manufacturer for refuelling. Westinghouse in December won approval from US nuclear regulators for a control system that will eventually allow the 8MW eVinci to be operated remotely. The reactor, which has minimal moving parts, uses pipes filled with liquid sodium to draw heat from its nuclear fuel and transfer it to the surrounding air, which can then run a turbine to produce electricity or be pumped into heating systems.

“Our goal is to be able to operate autonomously from a central location where we can just simply monitor a fleet of reactors that are deployed around the world,” said Ball…………………………………………

Ball said two of the target markets for eVinci reactors were data centres and the oil and gas industry, both on and offshore. He said the ability to run several microreactors side by side would make data centres more resilient than with a single source of energy.

…………………………………………………………………. But J Clay Sell, chief executive of X-energy, said the market for microreactors was “still emerging”. “We’ve probably invested as much as anyone in the sector,” he said. “But when you go down in size, the economics become much more challenged. You have to get to a greater level of scale for microreactors to become economic.”

………………………………………. there are questions over how to build, transport and run microreactors safely, said Ronan Tanguy, programme lead for safety and licensing at the World Nuclear Association. Regulators still have to draw up rules around whether microreactors can be operated remotely and how to make them safe from cyber attacks. Rules are also needed around transporting them, especially across national borders, and whether they should be fuelled in a factory or on site. Given their smaller size, they may also pose an easier target for nuclear fuel theft…………………. https://www.ft.com/content/a4c98cb2-797a-4943-9643-2fd75accfd59

Could AI soon make dozens of billion-dollar nuclear stealth attack submarines more expensive and obsolete?

By Wayne Williams, 5 Jan 25, https://www.techradar.com/pro/could-ai-soon-make-dozens-of-billion-dollar-nuclear-stealth-attack-submarines-more-expensive-and-obsolete

Artificial intelligence can detect undersea movement better than humans.

AI can process far more data from far more sensors than human operators can ever achieve

But the game of cat-and-mouse means that countermeasures do exist to confuse AI

Increase in compute performance and ubiquity of always-on passive sensors need also be accounted for.

The rise of AI is set to reduce the effectiveness of nuclear stealth attack submarines.

These advanced billion-dollar subs, designed to operate undetected in hostile waters, have long been at the forefront of naval defense. However, AI-driven advancements in sensor technology and data analysis are threatening their covert capabilities, potentially rendering them less effective.

An article by Foreign Policy and IEEE Spectrum now claims AI systems can process vast amounts of data from distributed sensor networks, far surpassing the capabilities of human operators. Quantum sensors, underwater surveillance arrays, and satellite-based imaging now collect detailed environmental data, while AI algorithms can identify even subtle anomalies, such as disturbances caused by submarines. Unlike human analysts, who might overlook minor patterns, AI excels at spotting these tiny shifts, increasing the effectiveness of detection systems.

Game of cat-and-mouse

AI’s increasing role could challenge the stealth of submarines such as those of the Virginia class, which rely on sophisticated engineering to minimize their detectable signatures.

Noise-dampening tiles, vibration-reducing materials, and pump-jet propulsors are designed to evade detection, but AI-enabled networks are increasingly adept at overcoming these methods. The ubiquity of passive sensors and continuous improvements in computational performance are increasing the reach and resolution of these detection systems, creating an environment of heightened transparency in the oceans.

Despite these advances, the game of cat-and-mouse persists, as countermeasures are, inevitably, being developed to outwit AI detection.

These tactics, as explored in the Foreign Policy and IEEE Spectrum piece, include noise-camouflaging techniques that mimic natural marine sounds, deploying uncrewed underwater vehicles (UUVs) to create diversions, and even cyberattacks aimed at corrupting the integrity of AI algorithms. Such methods seek to confuse and overwhelm AI systems, maintaining an edge in undersea warfare.

As AI technology evolves, nations will need to weigh up the escalating costs of nuclear stealth submarines against the potential for their obsolescence. Countermeasures may provide a temporary degree of relief, but the increasing prevalence of passive sensors and AI-driven analysis suggests that traditional submarine stealth is likely to face diminishing returns in the long term.

The Quiet Crisis Above: Unveiling the Dark Side of Space Militarization

By Justin James McShane, GeopoliticsUnplugged, Nov 28, 2024

Summary:

In this episode, we examine the growing militarization of space, focusing on the development and testing of anti-satellite weapons (ASATs) by various nations, including the U.S., Russia, China, and India. We detail the history of space militarization, from the Cold War to the present, highlighting the dangers of space debris and the inadequacy of existing treaties like the Outer Space Treaty in addressing modern threats. Different types of ASATs are described, both kinetic and non-kinetic, along with electronic warfare systems used for disrupting satellites. We also discuss the lack of international cooperation and robust enforcement mechanisms to prevent an arms race in space, emphasizing the need for new agreements to ensure the peaceful use of outer space. Ultimately, we warn of the potential for space to become a new theater of conflict.

https://geopoliticsunplugged.substack.com/p/ep93-the-quiet-crisis-above-unveiling-ed9

Departing Air Force Secretary Will Leave Space Weaponry as a Legacy

msn, by Eric Lipton, 30 Dec 24

WASHINGTON — Weapons in space. Fighter jets powered by artificial intelligence.

As the Biden administration comes to a close, one of its legacies will be kicking off the transformation of the nearly 80-year-old U.S. Air Force under the orchestration of its secretary, Frank Kendall.

When he leaves office in January — after more than five decades at the Defense Department and as a military contractor, including nearly four years as Air Force secretary — Mr. Kendall, 75, will have set the stage for a transition that is not only changing how the Air Force is organized but how global wars will be fought.

One of the biggest elements of this shift is the move by the United States to prepare for potential space conflict with Russia, China or some other nation.

In a way, space has been a military zone since the Germans first reached it in 1944 with their V2 rockets that left the earth’s atmosphere before they rained down on London, causing hundreds of deaths. Now, at Mr. Kendall’s direction, the United States is preparing to take that concept to a new level by deploying space-based weapons that can disable or disrupt the growing fleet of Chinese or Russian military satellites………………………

Perhaps of equal significance is the Air Force’s shift under Mr. Kendall to rapidly acquire a new type of fighter jet: a missile-carrying robot that in some cases could make kill decisions without human approval of each individual strike.

In short, artificial-intelligence-enhanced fighter jets and space-based warfare are not just ideas in some science fiction movie. Before the end of this decade, both are slated to be an operational part of the Air Force because of choices Mr. Kendall made or helped accelerate.

The Pentagon is the largest bureaucracy in the world. But Mr. Kendall has shown, more than most of its senior officials, that it too can be forced to innovate.

“It is big,” said Richard Hallion, a military historian and retired senior Pentagon adviser, describing the change underway at the Air Force. “We have seen the maturation of a diffuse group of technologies that, taken together, have forced a transformation of the American military structure.”

Mr. Kendall is an unusual figure to be the top civilian executive at the Air Force, a job he was appointed to by President Biden in 2021, overseeing a $215 billion budget and 700,000 employees…………….

Mr. Kendall, who has a folksy demeanor more like a college professor than a top military leader, comes at the job in a way that recalls his graduate training as an engineer.

He gets fixated on both the mechanics and the design process of the military systems his teams are building at a cost of billions of dollars. Mr. Kendall and Gen. David Allvin, the department’s top uniformed officer, have called this effort “optimizing the Air Force for great power competition.”………………….

Mr. Kendall has taken these innovations — built out during earlier waves of change at the Air Force — and amped up the focus on autonomy even more through a program called Collaborative Combat Aircraft.

These new missile-carrying robot drones will rely on A.I.-enhanced software that not only allows them to fly on their own but to independently make certain vital mission decisions, such as what route to fly or how best to identify and attack enemy targets.

The plan is to have three or four of these robot drones fly as part of a team run by a human-piloted fighter jet, allowing the less expensive drone to take greater risks, such as flying ahead to attack enemy missile defense systems before Navy ships or piloted aircraft join the assault.

Mr. Kendall, in an earlier interview with The Times, said this kind of device would require society to more broadly accept that individual kill decisions will increasingly be made by robots……………….

These new collaborative combat aircraft — which will cost as much as about $25 million each, compared to the approximately $80 million price for a manned F-35 fighter jet — are being built for the Air Force by two sets of vendors. One group is assembling the first of these new jets while a second is creating the software that allows them to fly autonomously and make key mission decisions on their own.

This is also a major departure for the Air Force, which usually relies on a single prime contractor to do both, and a sign of just how important the software is — the brain that will effectively fly these robotic fighter jets………………………………………

Space is now a fighting zone, Mr. Kendall acknowledged, like the oceans of the earth or battlefields on the ground.

The United States, Russia and China each tested sending missiles into space to destroy satellites starting decades ago, although the United States has since disavowed this kind of weapon because of the destructive debris fields it creates in orbit.

So during his tenure, the Air Force started to build out a suite of what Mr. Kendall called “low-debris-causing weapons” that will be able to disrupt or disable Chinese or other enemy satellites, the first of which is expected to be operational by 2026.

Mr. Kendall and Gen. Chance Saltzman, the chief of Space Operations, would not specify how these American systems will work. But other former Pentagon officials have said they likely will include electronic jamming, cyberattacks, lasers, high-powered microwave systems or even U.S. satellites that can grab or move enemy satellites.

The Space Force, over the last three years, has also been rapidly building out its own new network of low-earth-orbit satellites to make the military gear in space much harder to disable, as there will be hundreds of cheaper, smaller satellites, instead of a few very vulnerable targets.

Mr. Kendall said when he first came into office, there was an understandable aversion to weaponizing space, but that now the debate about “the sanctity or purity of space” is effectively over.

“Space is a vacuum that surrounds Earth,” Mr. Kendall said. “It’s a place that can be used for military advantage and it is being used for that. We can’t just ignore that on some obscure, esoteric principle that says we shouldn’t put weapons in space and maintain it. That’s not logical for me. Not logical at all. The threat is there. It’s a domain we have to be competitive in.” https://www.msn.com/en-us/news/politics/departing-air-force-secretary-will-leave-space-weaponry-as-a-legacy/ar-AA1wE4iS

Here comes Yakutia, Russia’s newest nuclear icebreaker

Rosatomflot now has eight nuclear-powered icebreakers in operation, the highest number since Soviet times.

Thomas Nilsen, Barents Observer 30 December 2024

The flag-raising ceremony happened at the Baltic Shipyard in St. Petersburg on December 28. It took four and a half years to build the Yakutia and the icebreaker is the first made with mostly Russian-made components.

Testing took place in the Gulf of Finland earlier in December, and the powerful vessel has now been delivered to Rosatomflot, the state-owned company in charge of sailings and infrastructure along the Northern Sea Route.

The three previous icebreakers of the same class had both Western and Ukrainian made parts. With sanctions implemented and the engine factory in Ukraine bombed, the shipyard had to look for import substitutes domestically.

“The sanctions restrictions that we faced did not prevent us from ensuring high-quality and timely construction of the order,” said Deputy General Director Andrei Buzinov with the Baltic Shipyard at the ceremony.

The Yakutia is powered by two RITM-200 reactors and will join the fleet of nuclear-powered icebreakers sailing out of Rosatomflot’s base in Murmansk.

The three sister vessels of the same class, the Arktika, Sibir and Ural are already crushing the ice along the Northern Sea Route, mainly for Russia’s LNG export to reach the markets.

The fleet also includes four older nuclear-powered icebreakers: the Yamal and 50 Let Pobedy, and the two Finnish-built vessels Taymyr and Vaygach. All of them have had their service lives extended.

Not since the late 1980s have more nuclear-powered icebreakers been in operation. Out at sea, the winter season 2024/2025 will be a record as several of the icebreakers in the late Soviet times stayed at port in Murmansk although they officially were on active duty. ……………..

The flag raising ceremony took place 65 years after the Soviet Union’s first nuclear-powered icebreaker, the Lenin, was launched from the yard in Severodvinsk. Lenin became the world’s first civilian nuclear-powered vessel and is today moored in Murmansk as a museum open to the public.

The two last icebreakers of the new class will also be named after past dictators. The Leningrad and Stalingrad are expected to be put in service in 2028 and 2030. Before that, the Chukotka will come in 2026.

If no unforeseen delays happen.

Last week, the Defense Ministry’s cargo ship Ursa Major sank in the Mediterranean carrying two 45-ton hatches meant to cover the reactors on the Rossiya icebreaker, currently under construction at the yard in Bolshoi Kamen near Vladivostok.

The giant icebreaker is already many years behind schedule and is unlikely to start sailing the Northern Sea Route’s East Arctic waters in 2027 as stipulated. https://www.thebarentsobserver.com/news/here-comes-yakutia-russias-newest-nuclear-icebreaker/422559

Big tech, bigger lies

by beyondnuclearinternational, https://beyondnuclearinternational.org/2024/11/10/big-tech-bigger-lies/

Microsoft, Google and Amazon are bragging they will use nuclear energy to power their energy needs, but it’s mainly hype or worse, writes M.V. Ramana.

In the last couple of months, Microsoft, Google, and Amazon, in that order, made announcements about using nuclear power for their energy needs. Describing nuclear energy using questionable adjectives like “reliable,” “safe,” “clean,” and “affordable,” all of which are belied by the technology’s seventy-year history, these tech behemoths were clearly interested in hyping up their environmental credentials and nuclear power, which is being kept alive mostly using public subsidies.

Both these business conglomerations—the nuclear industry and its friends and these ultra-wealthy corporations and their friends—have their own interests in such hype. In the aftermath of catastrophic accidents like Chernobyl and Fukushima, and in the face of its inability to demonstrate a safe solution to the radioactive wastes produced in all reactors, the nuclear industry has been using its political and economic clout to mount public relations campaigns to persuade the public that nuclear energy is an environmentally friendly source of power.

Tech giants like Microsoft, Amazon, and Google, too, have attempted to convince the public they genuinely cared for the environment and really wanted to do their bit to mitigate climate change. In 2020, for example, Amazon pledged to reach net zero by 2040. Google went one better when its CEO declared that “Google is aiming to run our business on carbon-free energy everywhere, at all times” by 2030. Not that they are on any actual trajectory to meeting these targets.

Why are they making such announcements?

Greenwashing environmental impacts

The reasons these companies invest in such PR campaigns are not hard to discern. There is growing awareness of the tremendous environmental impacts of the insatiable appetite for data from these companies, as well as the threat they pose to already inadequate efforts to mitigate climate change.

Earlier this year, the Wall Street company Morgan Stanley estimated that data centers will “produce about 2.5 billion metric tons of carbon dioxide-equivalent emissions through the end of the decade”. Climate scientists have warned that unless global emissions decline sharply by 2030, we are unlikely to limit global temperature rise to 1.5 degrees Celsius, a widely shared target. Even without the additional carbon dioxide emitted into the air as a result of data centers and their energy demand, the gap between current emissions and what is required is yawning.

But it is not just the climate. As calculated by a group of academic researchers, the exorbitant amounts of water required in the United States “to operate data centers, both directly for liquid cooling and indirectly to produce electricity” contribute to water scarcity in many parts of the country. This is the case elsewhere, too, and communities in countries ranging from Ireland to Spain to Chile are fighting plans to site data centers.

Then, there are the indirect impacts on the climate. Greenpeace documented, for example, that “Microsoft, Google, and Amazon all have connections to some of the world’s dirtiest oil companies for the explicit purpose of getting more oil and gas out of the ground and onto the market faster and cheaper.” In other words, the business models adopted by these tech behemoths depend on fossil fuels being used for longer and in greater quantities.

In addition to the increasing awareness about the impacts of data centers, one more possible reason for cloud companies to become interested in nuclear power might be what happened to cryptocurrency companies. Earlier this decade, these companies, too, found themselves getting a lot of bad publicity due to their energy demands and resulting emissions. Even Elon Musk, not exactly known as an environmentalist, talked about the “great cost to the environment” from cryptocurrency.

The environmental impacts of cryptocurrency played some part in efforts to regulate it. In September 2022, the White House put out a fact sheet on the climate and energy implications of crypto-assets, highlighting President Biden’s executive order that called on these companies to reduce harmful climate impacts and environmental pollution. China even went as far as banning cryptocurrency, and its aspiration to reduce its carbon emissions was one factor in this decision.

Crypto bros, for their part, did what cloud companies are doing now: make announcements about using nuclear power. Amazon, Google, and Microsoft are now following that strategy to pretend to be good citizens. However, the nuclear industry has its reasons for welcoming these announcements and playing them up.

The state of nuclear power

Strange as it might seem to folks basing their perception of the health of the nuclear industry on mainstream media, that technology is actually in decline. The share of global electricity produced by nuclear reactors has decreased from 17.5% in 1996 to 9.15% in 2023, largely due to the high costs of and delays in building and operating nuclear reactors.

A good illustration is the Vogtle nuclear power plant in the state of Georgia. When the utility company building the reactor sought permission from the Nuclear Regulatory Commission in 2011, it projected a total cost of $14 billion, and “in-service dates of 2016 and 2017” for the two units. The plant became operational only this year, after the second unit came online in March 2024, at a total cost of at least $36.85 billion.
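The scale of the Vogtle overrun is easier to see as a ratio. The short sketch below uses only the figures quoted in the paragraph above (a projected US$14 billion, an eventual cost of at least US$36.85 billion, and in-service dates slipping from 2016-17 to 2024); the function names are illustrative, and because the final cost is given as "at least" that amount, the results should be read as lower bounds.

```python
# Figures quoted in the article (US$ billions and calendar years).
PROJECTED_COST_BN = 14.0
ACTUAL_COST_BN = 36.85        # "at least", so treat as a lower bound
PROJECTED_LAST_UNIT_YEAR = 2017
ACTUAL_LAST_UNIT_YEAR = 2024


def overrun_ratio(projected: float, actual: float) -> float:
    """Actual cost expressed as a multiple of the projected cost."""
    return actual / projected


def overrun_percent(projected: float, actual: float) -> float:
    """Cost increase over the projection, in percent."""
    return (actual - projected) / projected * 100.0


def delay_years(projected_year: int, actual_year: int) -> int:
    """Whole calendar years between projected and actual in-service dates."""
    return actual_year - projected_year


if __name__ == "__main__":
    ratio = overrun_ratio(PROJECTED_COST_BN, ACTUAL_COST_BN)
    pct = overrun_percent(PROJECTED_COST_BN, ACTUAL_COST_BN)
    delay = delay_years(PROJECTED_LAST_UNIT_YEAR, ACTUAL_LAST_UNIT_YEAR)
    print(f"Cost multiple: at least {ratio:.2f}x the projection")
    print(f"Cost overrun:  at least {pct:.0f}%")
    print(f"Schedule slip: about {delay} years for the second unit")
```

On these numbers the plant cost more than two and a half times its projection and the second unit arrived roughly seven years late.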

Given this record, it is not surprising that there are no orders for any more nuclear plants.

As it has been in the past, the nuclear industry’s answer to this predicament is to advance the argument that new nuclear reactor designs would address all these concerns. But that has, yet again, proved not to be the case. In November 2023, the flagship project of NuScale, the small modular reactor design promoted as the leading one of its kind, collapsed because of high costs.

Supporters of nuclear power are now using another time-tested tactic to promote the technology: projecting that energy demand will grow so much that no other source of power will be able to meet these needs. For example, UK energy secretary Ed Davey resorted to this gambit in 2013 when he said that the Hinkley Point C nuclear plant was essential to “keep the lights on” in the country.

Likewise, when South Carolina Electric & Gas Company made its case to the state’s Public Service Commission about the need to build two AP1000 reactors at its V.C. Summer site—this project was subsequently abandoned after over $9 billion was spent—it forecast in its “2006 Integrated Resource Plan” that the company’s energy sales would increase by 22 percent between 2006 and 2016, and by nearly 30 percent by 2019.

This is the argument that the growth in data centres, propped up in part by the hype about generative artificial intelligence, has allowed proponents of nuclear energy to put forward. It remains to be seen whether this hype about generative AI actually materializes into a long-term sustainable business: see, for example, Ed Zitron’s meticulously documented argument for why OpenAI and Microsoft are simply burning billions of dollars and why their business model might “simply not be viable”.

In the case of the V.C. Summer project, South Carolina Electric & Gas found that its energy sales actually declined by 3 percent compared to 2006 by the time 2016 rolled around. Of course, that did not matter, because shareholders had already received over $2.5 billion in dividends and company executives had received millions of dollars in compensation, according to Nuclear Intelligence Weekly, a trade publication.

One wonders which executives and shareholders are going to receive a bounty from this round of nuclear hype.

What about emissions?

Will the investments in nuclear power by companies like Google, Microsoft, and Amazon help reduce emissions anytime soon?

The project expected to have the shortest timeline is the restart of the Three Mile Island Unit 1 reactor, which Constellation Energy projects will be ready in 2028. But if the history of reactor commissioning is anything to go by, that deadline will come and go without any power flowing from it.

Restarting a nuclear plant that has been shut down has never been done before. In the case of the Diablo Canyon nuclear plant in California, which hasn’t been shut down but was slated for decommissioning in 2024-25 until Governor Gavin Newsom did a volte-face, the Chair of the Diablo Canyon Independent Safety Committee explained why doing so was very difficult: “so many different programs and projects and so on have been put in place over the last half a dozen years predicated on that closure in 2024-25 and each one of those would have to be evaluated and some of them are okay, and some of them won’t be and some are going to be a real stretch and some are going to cost money and some of them aren’t going to be able to be done maybe”.

The cost of keeping Diablo Canyon open has been estimated by the plant’s owner at $8.3 billion and by independent environmental groups at nearly $12 billion. There are no reliable cost estimates for reopening Three Mile Island, but Constellation Energy, the plant’s owner, is already seeking a taxpayer-subsidized loan that would likely save the company $122 million in borrowing costs.

One must also remember that Microsoft already announced an agreement with Helion Energy, a company backed by billionaire Peter Thiel, to get nuclear fusion power by 2028. The chances of that happening are slim at best. In 2021, Helion announced that it had raised $500 million to build its fusion generation facility that would demonstrate “net electricity production” in three years, i.e., “in 2024”. That hasn’t happened so far. But going back further, one can see a similar and unfulfilled claim from 2014: then, the company’s chief executive had told the Wall Street Journal that the company hoped that its product would generate more energy than it would use “in the next three years” (i.e., in 2017). It is quite likely that Microsoft’s decision-makers knew how unlikely it is that Helion will be able to supply nuclear fusion power by 2028. The publicity value is the most likely reason for announcing an agreement with Helion.

What about the small modular nuclear reactor designs—X-energy and Kairos—that Amazon and Google are betting on? Don’t hold your breath.

X-energy is an example of a high-temperature gas-cooled reactor design that dates back to the 1940s. There have been four reactors based on similar concepts that were operated commercially, two in Germany and two in the United States, and test reactors in the United Kingdom, Japan, and China. Each of these reactors proved problematic, suffering a variety of failures and unplanned shutdowns. The latest reactor with a similar design was built in China. Its performance leaves much to be desired: within about a year of being connected to the grid, its power output was reduced by 25 percent of the design power capacity, and even at this lowered capacity, it operated in 2023 with a load factor of just 8.5 percent.

Kairos, on the other hand, will be challenged by its choice of molten salts as coolant. These are chemically corrosive, and decades of search have identified no materials that can survive for long periods in such an environment without losing their integrity. The one empirical example of a reactor that used molten salts dates back to the 1960s, and this experience proved very problematic, both when the reactor operated and in the half-century thereafter, because managing the radioactive wastes produced before 1970 continued to be challenging.

Simply throwing money will not overcome these problems that have to do with fundamental physics and chemistry.

Just a dangerous distraction

Although Amazon, Google, and Microsoft claim to be investing in nuclear energy to meet the needs of AI, the evidence suggests that their real motive is to greenwash themselves.

Their investments are small and completely inadequate with relation to how much is needed to build a reactor. But their investments are also very small compared to the bloated revenues of these corporations. So, from the viewpoint of top executives, investing in nuclear power must seem a cheap way to reduce bad publicity about their environmental footprints. Unfortunately, “cheap” for them does not translate to cheap for the rest of us, not to mention the burden to future generations of human beings from worsening climate change and, possibly, increased production of radioactive waste that will stay hazardous for hundreds of thousands of years.

Because nuclear power has been portrayed as clean and a solution to climate change, announcements about it serve as a flashy distraction to focus public attention on. Meanwhile, these companies continue to expand their use of water and draw on coal and especially natural gas plants for their electricity. This is the magician’s strategy: misdirecting the audience’s attention while the real trick happens elsewhere. Their talk about investing in nuclear power also distracts from the conversations we should be having about whether these data centers and generative AI are socially desirable in the first place.

There are many reasons to oppose and organize against the wealth and power exercised by these massive corporations, such as their appropriation of user data to engage in what has been described as surveillance capitalism, their contracts with the Pentagon, and their support for Israel’s genocide and apartheid. Their investment into nuclear technology, and more importantly, hyping it up, offers one more reason. It is also a chance to establish coalitions between groups involved in very different fights.

M. V. Ramana is the Simons Chair in Disarmament, Global and Human Security at the School of Public Policy and Global Affairs, University of British Columbia. His latest book is Nuclear Is Not The Solution. The Folly of Atomic Power In The Age Of Climate Change, available from Verso Books.

As construction of first small modular reactor looms, prospective buyers wait for the final tally.

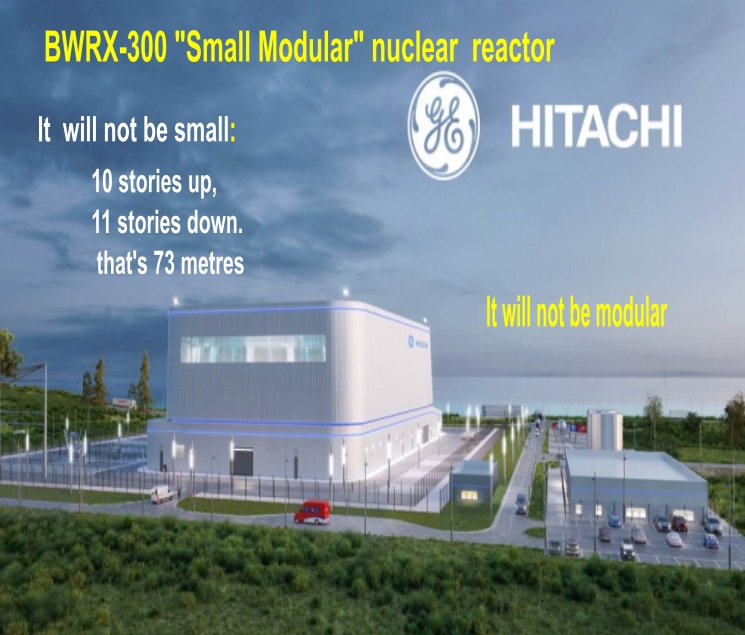

The first BWRX-300 could cost more than five times GE-Hitachi’s original target price.

Emerging consensus that SMRs are not economic.

“The nuclear people don’t operate in a vacuum, they operate in competition to other technologies,………… “The cost for solar is going down.”

Matthew McClearn, Dec. 27, 2024 , https://www.theglobeandmail.com/business/article-as-construction-of-first-small-modular-reactor-looms-prospective/

The race to construct Canada’s first new nuclear power reactor in 40 years seems to have passed a point of no return. This summer, Ontario Power Generation completed regrading the site for its Darlington New Nuclear Project in Clarington, Ont., and started drilling for the reactor’s retaining wall, which will be buried partly underground. At a regulatory hearing, OPG’s chief executive officer Ken Hartwick, who will retire at the end of this year, promised that this reactor will be “the first of many to come.”

But that will depend on a crucial yet-to-be-revealed detail: its price tag.

It’s no exaggeration to say that the world is waiting for it. The new Darlington reactor would be the first BWRX-300, a small modular reactor (SMR) being designed by an American vendor, GE-Hitachi Nuclear Energy, and the first SMR built in any Western country. Other prospective buyers include the Tennessee Valley Authority (TVA), SaskPower and Great British Nuclear. More BWRX-300s are in early planning stages in Poland and the Czech Republic.

Crucially, however, OPG is the first and only utility worldwide to bind itself contractually to build a BWRX-300. A report published by the U.S. Department of Energy in September said American utilities are waiting to see pricing and construction schedules for early units, and would “prefer to be fifth.” SaskPower also wants to avoid the risks associated with building a “first of a kind” reactor; it won’t decide until 2029 and it hopes SMRs will be less expensive than traditional nuclear plants.

Scheduled for release this winter, the Darlington SMR’s estimated cost will speak volumes about whether SMRs can deliver on their many promises. Yet there are early indications of serious sticker shock: Recently published estimates from the TVA suggest the first BWRX-300 could cost more than five times GE-Hitachi’s original target price. How will OPG and GE-Hitachi drive pricing far below the TVA’s estimate? And if they cannot, what then will be the prospects for SMRs?

Ditching the scaling law

SMRs were conceived as an antidote to the hefty price tags that brought reactor construction to a standstill in Western countries for decades.

Previously, the nuclear industry relied heavily on something called economies of scale or the “scaling law”: As a power plant’s size increases, capital costs also rise, but in a less than linear fashion. So vendors designed ever-larger reactors. Reactors under construction today average about one gigawatt, roughly three times the BWRX-300’s output. They can cost more than US$10-billion, leaving only the largest government-backed utilities as potential purchasers.
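A minimal sketch of the scaling law described above: total capital cost is modelled as growing with plant size raised to an exponent below one, so cost per kilowatt falls as plants get bigger. The power-law form and the exponent of 0.6 are illustrative assumptions borrowed from a common engineering rule of thumb, not figures from the article; the US$10 billion reference cost for a one-gigawatt plant and the roughly 300 MW size of the smaller unit follow the numbers quoted in the text.

```python
def scaled_capital_cost(ref_cost_usd: float, ref_mw: float,
                        target_mw: float, exponent: float = 0.6) -> float:
    """Estimate total capital cost at target_mw from a reference plant.

    Assumes cost ~ size ** exponent. An exponent below 1 means cost rises
    less than linearly with size; 0.6 is an assumed, illustrative value.
    """
    return ref_cost_usd * (target_mw / ref_mw) ** exponent


def cost_per_kw(total_cost_usd: float, capacity_mw: float) -> float:
    """Capital cost per kilowatt of generating capacity."""
    return total_cost_usd / (capacity_mw * 1000.0)


if __name__ == "__main__":
    REF_COST_USD = 10e9   # "more than US$10-billion" for a large reactor
    REF_MW = 1000.0       # "about one gigawatt"
    SMALL_MW = 300.0      # assumed size, "roughly" a third of that

    small_cost = scaled_capital_cost(REF_COST_USD, REF_MW, SMALL_MW)
    print(f"Large plant: ${cost_per_kw(REF_COST_USD, REF_MW):,.0f} per kW")
    print(f"Small plant: ${cost_per_kw(small_cost, SMALL_MW):,.0f} per kW")
```

Under these assumptions the smaller plant is cheaper in total but more expensive per kilowatt, which is the penalty that simplification and factory production are supposed to offset.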

SMRs represent a promising but untested new approach to manufacturing reactors – one that emphasizes simplification and mass production techniques. The key term is modular: Rather than building monolithic, one-of-a-kind plants, the industry hoped instead to churn out substantially identical factory-built units; repetition would help drive down costs, as it had for competing technologies such as wind turbines and solar panels.

But modularity requires multiple orders, which in turn demands competitive pricing. Through early discussions with potential customers, GE-Hitachi executives understood the BWRX-300 had to be priced low, not only in absolute terms, but also relative to other power-generation technologies. They told audiences it would cost less than US$1-billion, or US$2,250 per kilowatt of power generation capacity – low enough to compete with natural gas-fired power plants.

“The total capital cost of one plant has to be less than $1-billion in order for our customer base to go up,” Christer Dahlgren, a GE-Hitachi executive, said during a talk in Helsinki in March, 2019.
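As a rough consistency check on the target price, the sketch below multiplies the quoted US$2,250 figure by an assumed plant size. Two things here are assumptions rather than statements from the article: the figure is read as dollars per kilowatt of capacity, and the plant is taken to be 300 MW, as the reactor's name and the "roughly three times" comparison suggest. The `multiplier` argument is a hypothetical helper for exploring what a several-fold overrun would imply.

```python
TARGET_USD_PER_KW = 2250       # quoted target, read as US$ per kW of capacity
ASSUMED_CAPACITY_MW = 300      # assumption based on the BWRX-300 name


def implied_plant_cost(usd_per_kw: float, capacity_mw: float,
                       multiplier: float = 1.0) -> float:
    """Total plant cost implied by a per-kilowatt price.

    multiplier scales the result, e.g. 5.0 for a fivefold overrun.
    """
    return usd_per_kw * capacity_mw * 1000 * multiplier


if __name__ == "__main__":
    target = implied_plant_cost(TARGET_USD_PER_KW, ASSUMED_CAPACITY_MW)
    fivefold = implied_plant_cost(TARGET_USD_PER_KW, ASSUMED_CAPACITY_MW, 5.0)
    print(f"Implied target cost:   ${target / 1e9:.3f} billion")
    print(f"Five times that price: ${fivefold / 1e9:.3f} billion")
```

With these assumptions the implied target comes in below the US$1 billion ceiling quoted by the company, and a fivefold increase would push a single unit well above it.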

Shrinking a giant

GE-Hitachi’s designers began by shrinking a behemoth: the 1,500-megawatt Economic Simplified Boiling Water Reactor (ESBWR). Their objective was to reduce the volume of the building housing the reactor by 90 per cent, to greatly reduce the amount of concrete and steel required during construction.

This was accomplished primarily through eliminating safety systems. Pressure relief valves, common in traditional reactors, were removed. In place of two completely separate emergency shutdown systems, as is customary, the BWRX-300 would have two systems that would propel the same set of control rods into the reactor’s core. GE-Hitachi emphasized that the BWRX-300 featured “passive” safety systems that would keep the reactor safe during an accident, and its simplicity reduced the need for redundant engineered systems.

Sean Sexstone, head of GE-Hitachi’s advanced nuclear team, said the entire facility – which includes the reactor building, the control room and the turbine hall – will measure just 145 metres by 85 metres.

“You can walk that site in a minute-and-a-half,” he said.

GE-Hitachi also sought substitutes for concrete. The reactor building is to be constructed using factory-made steel panels that will be shipped to the site, assembled into modules and lifted by crane into position. These modules essentially serve as forms into which concrete is poured. These steel plates are as strong as concrete, OPG says, yet eliminate the need to use rebar extensively. This approach “lends itself to more modularity, more work in a factory, versus more work in the field,” Mr. Sexstone explained.

The Darlington SMR will be erected using a technique called “open-top construction.” The reactor building’s roof won’t be installed until the very last. The building will be constructed upward, floor by floor, with large components lowered in by crane rather than being moved through doors and hatches.

Many of the BWRX-300’s components would be identical to those used in previous GE power plants, such as its control rods, fuel assemblies and steam separators. Its steam turbine would be the same one used in natural-gas-fired plants. And the plant could be run by as few as 75 staff, far below the nearly 1,000 employed at large single-reactor Canadian nuclear plants.

Historically, utilities tended to build bespoke nuclear plants meeting highly individualized requirements. The result: In the United States alone there are more than 50 commercial reactor designs. Few designs were built twice, limiting opportunities to learn through repetition.

GE-Hitachi intended the BWRX-300 to be highly standardized, constructible in multiple countries with as few tweaks as possible. It assembled an international coterie of utility partners, including OPG, the TVA and a Polish company named Synthos Green Energy, which last year agreed to jointly contribute to the estimated US$400-million cost of the SMR’s standardized design.

Subo Sinnathamby, OPG’s chief projects officer, acknowledged in an interview that the first SMR will be expensive. But lessons learned from building it, including newly identified opportunities for additional modularization, will be applied to three subsequent units at Darlington, bringing down overall costs.

“For us, success is going to be sticking to how we have executed megaprojects at OPG, using the same processes and principles,” she said, citing the continuing refurbishment of Darlington’s existing reactors.

“The last thing we want to do is get into construction and then stop the work force.”

GE-Hitachi’s emphasis on lowering plant costs has been validated by many independent observers, who regard it as essential to SMRs’ future prospects.

In a report published in May, Clean Prosperity, a climate policy think tank, concluded that the BWRX-300 “is the strongest candidate” among SMRs to experience continued cost reductions as more were built – but only at the right price, which it pegged at about $3.3-billion. “Cost curves will only become possible for the BWRX-300 in Ontario and beyond,” it warned, “with a final price tag that is low enough to compel additional expansion.”

In September, the U.S. Department of Energy published a report examining the prospects for widespread deployment of reactors across the U.S., an expansion it strongly supported. But to drive down costs, SMR vendors needed to move more than half of the overall spending on a project into standardized factory-like production – a tall order.

Similarly, a report published last year by the U.S. National Academy of Sciences argued that if nuclear plants are to contribute meaningfully to future electricity systems, they must be cost-competitive with other low-emission technologies. It looked at so-called overnight capital costs – what costs would be if construction were completed overnight, with no charges for financing and no consideration of how long construction will take. The academy said capital costs should be US$2,000 or less per kilowatt of generating capacity. At between US$4,000 and US$6,000 a kilowatt, reactors might still be competitive if costs unexpectedly rose for renewable technologies.

Enter the TVA.

In an integrated resource plan published in September, the TVA estimated that a first light water SMR would have an overnight capital cost of nearly US$18,000 a kilowatt.

At that pricing, the first Darlington SMR would cost more than $8-billion. That’s about 10 times the cost of a similarly sized natural-gas-fired plant: SaskPower’s recently completed Great Plains Power Station, a 377 MW natural-gas-fired plant in Moose Jaw, cost just $825-million.
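The arithmetic behind this kind of comparison can be sketched in a few lines of Python. This is a rough illustration, not the TVA's or OPG's method: the 300 MW capacity is an assumption taken from the BWRX-300's name, US$18,000 rounds the TVA's "nearly US$18,000" figure, and the SMR result is in U.S. dollars with no currency conversion, so it will not line up exactly with the dollar figures quoted above.

```python
# Rough sketch: overnight capital cost = capacity (kW) x cost per kW.
# Assumptions (not taken from the TVA's plan): 300 MW capacity and a
# rounded US$18,000 per kilowatt.

def overnight_cost(capacity_mw: float, cost_per_kw: float) -> float:
    """Return total overnight capital cost for a plant of the given size."""
    return capacity_mw * 1_000 * cost_per_kw  # 1 MW = 1,000 kW


def cost_per_kw(total_cost: float, capacity_mw: float) -> float:
    """Return cost per kilowatt of capacity for a plant with a known price."""
    return total_cost / (capacity_mw * 1_000)


smr_usd = overnight_cost(300, 18_000)
# Great Plains Power Station: $825-million for 377 MW, as quoted above.
gas_per_kw = cost_per_kw(825_000_000, 377)

print(f"SMR at TVA pricing: US${smr_usd / 1e9:.1f}-billion")
print(f"Great Plains Power Station: about ${gas_per_kw:,.0f} per kilowatt")
```

On a per-kilowatt basis, the gas plant works out to a little under $2,200, which is the scale of gap the comparison above is pointing at.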

Oregon-based NuScale Power Corp. has already discovered what happens when pricing falls in this range. The company was founded in 2007, and its 77-MW NuScale Power Module was the first SMR to be licensed by regulators in a Western country. But last year its flagship project, undertaken with the Utah Association of Municipal Power Systems (UAMPS), was cancelled after costs soared to about US$20,000 a kilowatt.

There are several important caveats about the TVA’s estimate.

Greg Boerschig, a TVA vice-president, described it as a “Class 5” estimate. According to standard global practices, cost estimation is based on a five-level system. Class 5 is the least detailed and reliable and is intended for planning purposes; actual costs could be half that much, or double.
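To see how wide that band is, here is a minimal Python sketch that applies the "half that much, or double" range to the TVA's figure. The 0.5 and 2.0 multipliers come from the description above rather than from any formal estimating standard, and US$18,000 is a rounding of "nearly US$18,000".

```python
# Sketch of the uncertainty band on a Class 5 estimate, using the
# "half that much, or double" range described in the article.
# The multipliers are illustrative, not a formal standard.

def class5_range(estimate: float) -> tuple[float, float]:
    """Return (low, high) bounds: half the estimate and double it."""
    return estimate * 0.5, estimate * 2.0


low, high = class5_range(18_000)  # rounded TVA figure, US$ per kilowatt
print(f"Possible range: US${low:,.0f} to US${high:,.0f} a kilowatt")
```

Under those assumptions the true figure could land anywhere between US$9,000 and US$36,000 a kilowatt.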

The estimate is far higher than the TVA would have liked, Mr. Boerschig said. But since OPG is further along in deploying the BWRX-300, he added, it has a better sense of the reactor’s cost.

“We’re a couple of years behind them,” Mr. Boerschig acknowledged.

Indeed, according to a presentation by Aecon Group Inc., a partner on the Darlington SMR, a Class 4 estimate had already been completed as of February this year. Ms. Sinnathamby said OPG is working on a Class 3 estimate.

“Our number is going to be very specific: What is it going to cost us to build, on this location, these four SMRs?” she said.

Another caveat is that the BWRX-300 was only one of several reactors represented in the estimate, which was based on the TVA’s experience exploring potential SMRs at its Clinch River site near Oak Ridge, Tenn., and by examining recently completed nuclear construction projects.

OPG might enjoy certain cost advantages over the TVA. The Darlington Nuclear Generating Station is a complex that was built during the 1980s and early 1990s on the shore of Lake Ontario, the proximity of which could make cooling reactors there cheaper. Clinch River is a greenfield site, whereas Darlington already has four operating reactors.

“That will automatically reduce the cost to OPG relative to TVA,” said Koroush Shirvan, a professor of energy studies at the Massachusetts Institute of Technology, who has studied the BWRX-300’s economics.

Nonetheless, opponents and skeptics of SMRs in general, and the Darlington SMR in particular, have embraced TVA’s estimate.

Chris Keefer, an emergency medicine physician, has advocated passionately for refurbishment of Ontario’s existing nuclear power plants, which are all based on Canada’s homegrown reactor design, the Candu. He has also argued for modernizing the Candu design and building more. He said the TVA’s estimates reflect a more honest assessment of SMR pricing than Canadians received in the past.

“It points to this emerging consensus that SMRs are not economic, and that shouldn’t be a surprise,” he said.

“TVA, I think they’ve got several hundreds of millions of dollars in the development process on this reactor. I wouldn’t say that those numbers are naive.”

Prof. Shirvan said his own cost estimate for the BWRX-300 reactor is “in line” with the TVA’s.

Chris Gadomski, head of nuclear research at BloombergNEF, said TVA’s estimates are discouragingly high, and imply that reactor sales might be less than anticipated. Contributing factors might include high labour costs in North America, and recent high inflation and high financing costs, factors he expects will persist.

“The nuclear people don’t operate in a vacuum, they operate in competition to other technologies,” he said.

“The cost for solar is going down. The cost of batteries, we anticipate, is going down. And so, when you’re looking at spending billions of dollars and all of a sudden the price tag gets so large, people will say: ‘Hey, listen, you’ve got to look at other options, or buy less of this.’ ”

If there is a silver lining, the TVA estimated follow-on SMRs would cost substantially less than the first, at roughly US$12,500 a kilowatt. But that’s still more than double the upper limit the U.S. National Academy of Sciences deemed necessary to support widespread SMR adoption.
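The "more than double" claim is easy to check. The short Python sketch below uses only per-kilowatt figures quoted in this article, with the first-unit cost rounded to US$18,000; the variable names are illustrative.

```python
# Compare the TVA's follow-on estimate with the academy's thresholds.
# All figures are US$ per kilowatt, as quoted in the article.

NAS_TARGET = 2_000       # academy's preferred capital cost
NAS_UPPER = 6_000        # top of the range that "might still be competitive"
FIRST_UNIT = 18_000      # rounded from "nearly US$18,000"
FOLLOW_ON = 12_500       # TVA estimate for later units

ratio_to_upper = FOLLOW_ON / NAS_UPPER
ratio_to_target = FOLLOW_ON / NAS_TARGET
saving = 1 - FOLLOW_ON / FIRST_UNIT

print(f"Follow-on vs. upper limit: {ratio_to_upper:.2f}x")
print(f"Follow-on vs. target: {ratio_to_target:.2f}x")
print(f"Saving vs. first unit: {saving:.0%}")
```

Follow-on units come out roughly 31 per cent cheaper than the first, yet still a little over twice the academy's upper limit and more than six times its target.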

We might learn in a few months whether GE-Hitachi and OPG have succeeded in bringing the BWRX-300’s cost down. But a review of regulatory applications and other documents hints at why the original US$1-billion target price might be difficult to realize.

Prof. Shirvan said GE-Hitachi’s original plan – to slim the reactor down by removing safety systems – encountered resistance from regulators in Canada and the U.S. “When you strip out most of the safety system, you have to come up with very good reasoning how that’s justified,” he said. GE-Hitachi started adding some of those systems back in, he said, which caused the diameter of the BWRX-300’s reactor building to swell.

This dramatic increase, Mr. Keefer said, has greatly reduced the BWRX-300’s economic attractiveness.

“Proportionately, you’re actually doing a lot more civil works than you would for a large reactor,” he said. “And that actually means that the whole SMR paradigm, which is to get all the work into a factory, goes away.”

(GE-Hitachi denied that the plant had grown. “While the design has matured, the overall footprint of the BWRX-300 plant has not changed significantly,” Mr. Sexstone said.)

OPG’s regulatory documents also make clear that some modular construction techniques it seeks to employ at Darlington are in their infancy. As recently as last year, most of the walls and floors of the SMR building were to have been built using a technique developed in Britain known as Steel Bricks. GE-Hitachi recently dropped Steel Bricks in favour of a similar approach known as Diaphragm Plate Steel Composite.

Moreover, OPG’s published construction plans show that the reactor building will be built largely below-grade, requiring significant excavation including into bedrock. Tunnel boring machines will be used to excavate more tunnels, tens of metres wide, to convey cooling water to and from Lake Ontario. Make no mistake, the Darlington SMR remains a complex capital project.

To date there have been no indications that pricing might derail the Darlington SMR. Ontario’s government appears willing to pay a significant premium: It hopes that as a first mover, OPG will be well-poised to sell equipment and expertise in other countries.

During a stump speech in Scarborough in December, Energy Minister Stephen Lecce said Ontario was keen to sell its technology and expertise for building SMRs abroad.

“I was just in Poland and Estonia, literally selling Canadian small modular reactors that will be built here, exported there,” he said.

Yet Mr. Lecce has also vowed to keep Ontarians’ electricity bills low, an objective high SMR price tags might compromise.

GE-Hitachi maintains its creation’s pricing will stack up favourably.

“I think we’re in a really good spot to feel very comfortable about this unit being probably the most cost competitive SMR in the market,” Mr. Sexstone said. “I think your readers will be pleasantly surprised.”

Ms. Sinnathamby, for OPG’s part, said actual costs to construct BWRX-300s should be considerably lower than TVA’s estimate.

“The TVA numbers can only come down,” she said. “That’s how conservative, in our mind, those numbers are.”

AI goes nuclear

Big tech is turning to old reactors (and planning new ones) to power the energy-hungry data centers that artificial intelligence systems need. The downsides of nuclear power—including the potential for nuclear weapons proliferation—have been minimized or simply ignored.

Bulletin, By Dawn Stover, December 19, 2024

When Microsoft bought a 407-acre pumpkin farm in Mount Pleasant, Wisconsin, it wasn’t to grow Halloween jack-o’-lanterns. Microsoft is growing data centers—networked computer servers that store, retrieve, and process information. And those data centers have a growing appetite for electricity.

Microsoft paid a whopping $76 million for the pumpkin farm, which was assessed at a value of about $600,000. The company, which has since bought other nearby properties to expand its footprint to two square miles, says it will spend $3.3 billion to build its 2-million-square-foot Wisconsin data center and equip it with the specialized computer processors used for artificial intelligence (AI).

Microsoft and OpenAI, maker of the ChatGPT bot, have talked about building a linked network of five data centers—the Wisconsin facility plus four others in California, Texas, Virginia, and Brazil. Together they would constitute a massive supercomputer, dubbed Stargate, that could ultimately cost more than $100 billion and require five gigawatts of electricity, or the equivalent of the output of five average-size nuclear power plants.

Microsoft, Amazon, Apple, Google, Meta, and other major tech companies are investing heavily in data centers, particularly “hyperscale” data centers that are massive not only in size but also in their processing capabilities for data-intensive tasks such as generating AI responses. A single hyperscale data center can consume as much electricity as tens or hundreds of thousands of homes, and there are already hundreds of these centers in the United States, plus thousands of smaller data centers.

In just the past year, US electric utilities have nearly doubled their estimates of how much electricity they’ll need in another five years. Electric vehicles, cryptocurrency, and a resurgence of American manufacturing are sucking up a lot of electrons, but AI is growing faster and is driving the rapid expansion of data centers. A recent report by the global investment bank Goldman Sachs forecasts that data centers will consume about 8 percent of all US electricity in 2030, up from about 3 percent today